A simple text prompt and a sample of a major politician’s voice is all it takes to create convincing deepfake audio with artificial intelligence.

Such audio could spread damaging misinformation during elections, and "insufficient" or "nonexistent" safety measures make it easy to produce, according to a new study released Friday.

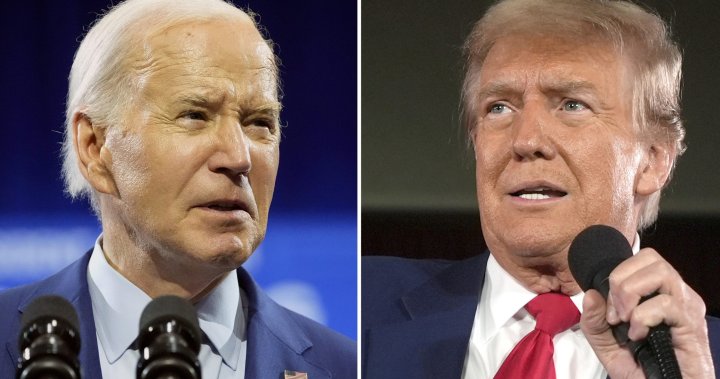

Researchers at the Center for Countering Digital Hate tested six of the most popular online AI voice-cloning tools to determine how easy it is to create misleading audio of politicians like U.S. President Joe Biden, former president and current Republican candidate Donald Trump, British Prime Minister Rishi Sunak and others.

Five of the six tools tested failed to prevent the generation of those voice clips, most of which were deemed convincing. Researchers were also able to get around safety features on some platforms by simply using a different, less restrictive AI voice-cloning tool.

The generated clips included fake audio of Biden, Trump, Sunak and other political figures falsely urging people not to vote due to bomb threats at polling stations, claiming their votes are being counted twice, admitting to lying or discussing medical issues.

“We’ve shown that these platforms can be abused all too easily,” said Imran Ahmed, CEO of the Center for Countering Digital Hate, in an interview with Global News.

LISTEN BELOW: An AI-generated clone of Trump’s voice created by Speechify appears to portray Trump admitting to lying in order to get elected. (Audio provided by the Center for Countering Digital Hate)

The report comes ahead of consequential elections this year in the United Kingdom, which goes to the polls in just over a month, as well as in the United States and several other democracies around the world.

Canada is due to have a federal election no later than October 2025.

The Canadian Security Intelligence Service, the U.S. Department of Homeland Security and other bodies around the world have identified AI-generated deepfakes as a threat to democracy.

Last year, Canada’s Communications Security Establishment warned in a report that generative AI will “pollute” upcoming election campaigns, including in Canada.

The Organization for Economic Co-operation and Development (OECD)'s AI Incidents Monitor, which tracks cases of AI-generated content around the world, recorded a 679 per cent year-over-year increase since March 2023 in the number of incidents involving human voices.

Researchers tested AI voice-cloning tools from ElevenLabs, Speechify, PlayHT, Descript, Invideo AI and Veed by uploading two-minute audio samples of each politician speaking, edited from publicly available videos.

Beyond Biden, Trump and Sunak, the study also used the voices of U.S. Vice President Kamala Harris, British Labour Party leader Keir Starmer, French President Emmanuel Macron, European Commission President Ursula von der Leyen and European Internal Market Commissioner Thierry Breton.

The researchers then fed each voice-cloning tool the same five one-sentence text prompts for each of the eight voices, for a total of 40 tests per tool.

All six platforms have paid subscriber plans, but also include a free option that allows users to generate a short, one-time clip at no cost.

LISTEN BELOW: An AI-generated clone of Harris’ voice created by Speechify appears to portray Harris discussing a medical issue. (Audio provided by the Center for Countering Digital Hate)

Of the six tools, the report found only ElevenLabs had what researchers deemed sufficient safety measures — which the company calls “no-go voices” — that immediately blocked the creation of false audio clips of Biden, Trump, Harris, Sunak and Starmer. But the same platform failed to recognize the European politicians’ voices as ones that should also be barred, and created 14 convincing audio clips, which the report dubs a “safety failure.”

Aleksandra Pedraszewska, ElevenLabs’ head of AI safety, told Global News in a statement the company is “constantly improving the capabilities of our safeguards, including the no-go voices feature.”

The company says it is currently evaluating additional voices to add to its “no-go voices” list and is “working to expand this safeguard to other languages and election cycles.”

“We hope other audio AI platforms follow this lead and roll-out similar measures without delay,” Pedraszewska said.

LISTEN BELOW: An AI-generated clone of Macron’s voice created by ElevenLabs appears to portray Macron admitting to stealing campaign funds. (Audio provided by the Center for Countering Digital Hate)

Three other platforms tested — Descript, Invideo and Veed — contain a feature that requires users to actually upload audio of a person saying the specific statement they want cloned before generating the desired clip. But researchers found they could bypass that safeguard through a “jailbreaking” technique by generating the statement with a different AI cloning tool that did not have the same feature. The resulting clip was convincing enough to fool those three platforms.

Researchers also found Invideo went further than simply generating a clip of the statement fed to the cloning tool, and would auto-generate full speeches filled with disinformation.

“The voice even incorporated Biden’s characteristic speech patterns,” the report says.

LISTEN BELOW: An AI-generated clone of Biden's voice created by Descript appears to portray Biden warning of bomb threats at polling stations in the U.S. that require the election to be delayed. (Audio provided by the Center for Countering Digital Hate)

LISTEN BELOW: Invideo AI was fed the same text prompt about bomb threats, but its clone of Biden's voice generated a nearly minute-long speech on the subject that included Biden's habitual stumbling over words. (Audio provided by the Center for Countering Digital Hate)

Global News reached out to all six companies for comment on the report’s findings and recommendations, but only ElevenLabs and Invideo responded by deadline.

A spokesperson for Invideo AI said it requires consent from people whose voices are cloned, but did not respond by deadline to the report’s findings that the safeguard can be avoided.

The report notes only ElevenLabs and Veed include specific prohibitions in their user policies against creating content that can influence elections. All but PlayHT explicitly prohibit the creation of “misleading” content, and only PlayHT and Invideo do not have language that bars users from nonconsensual cloning or impersonation of voices.

The Center for Countering Digital Hate is urging AI and social media platforms to quickly adopt guardrails to prevent content created with their tools from being used to spread misinformation. Those include so-called "break glass" measures to detect and remove false or misleading content. The report also calls for industry standards for AI safety and updated election laws to address AI-generated misinformation.

“We need (these measures) quickly because this is an emergency,” Ahmed said.

Several countries have already seen high-profile examples of misinformation fueled by AI-generated content.

In January, voters in New Hampshire received a robocall that appeared to be a recording of Biden urging them not to vote in that state’s Democratic primary.

State prosecutors investigating the incident have confirmed the audio was an AI deepfake, and a Democratic consultant aligned with a rival campaign is facing criminal charges.

Deepfake audio clips posted online last year falsely appeared to capture Starmer, the leading candidate for British prime minister in July’s election, abusing Labour Party staffers and criticizing the city of Liverpool.

During Slovakia's election campaign last fall, an audio clip went viral that allegedly captured liberal party leader Michal Šimečka discussing with a journalist how to rig the vote. The audio was later determined to have been created with AI. Robert Fico, who became prime minister after that election, survived an assassination attempt earlier this month.